Alt-ering the Art Institute of Chicago

May 12, 2023

Hackdays at Cogapp are always enjoyable creative chaos. This time, we had the pleasure of cooperating with the Art Institute of Chicago (AIC). I teamed up with James Waterfield to build a browser extension for the AIC that would replace any collection image with an AI-generated image.

Alt-ify me: a browser extension to AI-replace images based on their alt text

My day-to-day work involves a lot of accessibility testing and for the hackday I wanted to take a playful approach to this by generating artworks based on the alt text for an image. Alt text is short for alternative text. It serves as a substitute for the image and helps assistive technologies like screen readers understand the image content so they can present it to their users.

Our extension, with the catchy name AIALTAIC, is written in plain JavaScript. It adds a button labelled “altify me” to the page when a user views any collection object at the AIC. When the button is clicked, the image is replaced with its machine-generated counterpart. Another click brings the original image back. I created the browser extension and James worked on the image generation in the backend.

The idea was simple but the journey had quite a few bumps and potholes.

Originally, we were inspired by Midjourney, an AI image generator that has really impressed in recent weeks with its realistic depictions of people and things. Sadly, Midjourney doesn’t have an API and does not allow for programmatic interaction, so we turned to Replicate, which hosts AI image generation models and has an excellent API that communicated well with our browser extension and backend.

The resulting images were mostly good though at times decidedly “off.” Limbs, and especially hands, would disappear into the background or morph into other objects. Sometimes the AI ignored specific instructions, like the placement of objects inside the image. And unlike Midjourney, the faces it generated were not particularly human. We were able to mitigate some of these issues to a degree by adding the artist name and the medium to our prompt rather than just the alt text.

Another problem we encountered was that the Replicate API incorrectly responded with an error saying the prompt was NSFW even though the prompt was very clearly SFW. For example, we saw this message whilst generating an image based on the prompt “Oil on canvas”. When we resubmitted it, the AI worked fine.

But most of the issues we faced are related to the machine learning model and the fact that this tech is still young.

From alt text to art tech: our image generation backend

Once we had decided on Replicate for our image generation, the biggest remaining challenge was the fact that AI image generation is still way too slow and resource-intensive to be handled via a browser extension. This is where James stepped in:

We needed a solution that allows this part to be handled somewhere else, so we used AWS to run it entirely in the cloud. Once the browser extension has extracted the width and height of the image (so we can generate an image with similar dimensions) and the artwork ID (taken from the URL), it sends this information to an API endpoint on AWS. This endpoint triggers a Lambda function, which is a short-running piece of code that runs in the AWS cloud. The output of this Lambda function is an image uploaded to S3, Amazon’s storage service.
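To make the request step concrete, here is a minimal sketch of how a Lambda handler might read those three values. It assumes the endpoint passes them as query string parameters in an API Gateway proxy event; the parameter names `artwork_id`, `width` and `height` and the function names are hypothetical, not taken from our actual code.

```python
import json


def parse_request(event):
    """Pull the artwork ID and image size out of an API Gateway proxy event.

    Assumes the values arrive as query string parameters with these
    (hypothetical) names.
    """
    params = event.get("queryStringParameters") or {}
    try:
        return {
            "artwork_id": int(params["artwork_id"]),
            "width": int(params["width"]),
            "height": int(params["height"]),
        }
    except (KeyError, TypeError, ValueError) as exc:
        raise ValueError(f"missing or invalid parameter: {exc}") from exc


def handler(event, context):
    """Entry point: validate the input and answer with a JSON body."""
    try:
        request = parse_request(event)
    except ValueError as exc:
        return {"statusCode": 400, "body": json.dumps({"error": str(exc)})}
    # A real function would look up or generate the image at this point.
    return {"statusCode": 200, "body": json.dumps(request)}
```

Rejecting bad input with a 400 response before any generation starts keeps malformed requests from triggering slow and costly model runs.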

The Lambda function is written in Python and first checks whether the image already exists on S3. The output images are all named with the relevant artwork ID. If an image already exists in S3, the URL of the image is returned in the API response. If it doesn’t exist, the artwork ID is used to query the Art Institute of Chicago’s API. The response to this query gives us the name of the artist or artists responsible for the artwork, the medium of the artwork and the alt text of the image. This information is then used to create a prompt of the form:

A <medium> in the style of <artist name(s)> of <alt-text>
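The template above could be filled in along these lines. This is a sketch under assumptions: the record keys `medium_display`, `artist_titles` and `thumbnail.alt_text` are our guess at the shape of one artwork record from the AIC API, the function names are hypothetical, and joining several artists with " and " is an arbitrary choice.

```python
def fields_from_record(data):
    """Pick the three prompt ingredients out of one artwork record.

    The keys used here are assumptions about the record's shape.
    """
    thumbnail = data.get("thumbnail") or {}
    return {
        "medium": data.get("medium_display", ""),
        "artists": data.get("artist_titles") or [],
        "alt_text": thumbnail.get("alt_text", ""),
    }


def build_prompt(medium, artists, alt_text):
    """Fill the template: A <medium> in the style of <artist name(s)> of <alt-text>."""
    # Joining several artists with " and " is an assumption.
    artist_names = " and ".join(artists)
    return f"A {medium} in the style of {artist_names} of {alt_text}"
```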

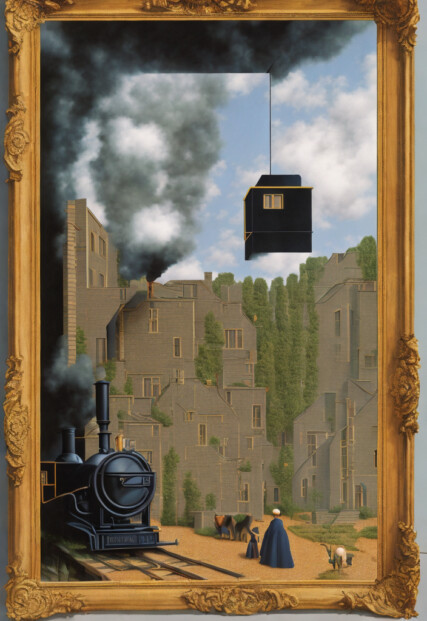

For example, we extracted the following prompt for René Magritte’s Time Transfixed:

A Oil on canvas in the style of René Magritte of Surrealist painting of miniature train exiting fireplace mid-air, large mirror over mantle.

This was the result:

AI recreation of Magritte’s surrealist painting Time Transfixed. The frame is a nice embellishment the AI added — maybe because of the mention of a mirror above a mantle?

For comparison, here is the original:

This prompt is then passed to the Replicate API to generate the image. Replicate is a service which lets you run machine learning models in the cloud and retrieve the output in one call. We used its Python interface and the Midjourney diffusion model to create the image. As we wanted to produce higher quality output, we increased the number of inference steps that the model runs to 150.
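A call of this kind might look roughly like the sketch below. It uses `replicate.run` from Replicate’s Python client. `MODEL_REF` is a placeholder rather than a real model reference, the input key names are assumptions that should be checked against the chosen model’s schema, and the function names are hypothetical.

```python
# Placeholder only: substitute the real "owner/name:version" reference.
MODEL_REF = "owner/model-name:version-id"


def build_model_input(prompt, width, height, steps=150):
    """Assemble the model input; the key names are assumed, not confirmed."""
    return {
        "prompt": prompt,
        "width": width,
        "height": height,
        "num_inference_steps": steps,
    }


def generate_image_url(prompt, width, height):
    """Run the model on Replicate and return the URL of the first output."""
    # Third-party client; it reads the REPLICATE_API_TOKEN environment variable.
    import replicate

    output = replicate.run(MODEL_REF, input=build_model_input(prompt, width, height))
    # Assumes the model returns a list whose first item is the image URL.
    return str(output[0])
```

Keeping the input assembly in its own function means the parameters can be inspected without making a paid API call.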

Before the prompt was sent, we picked the width and height, from the values the model allows, that came closest to the original image’s dimensions without exceeding the model’s maximum area. This keeps the aspect ratio of the generated image close to that of the original artwork.
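One way to make that choice is sketched here. The allowed sizes and the maximum area are assumed values for illustration only and should be replaced with whatever the model documents. The selection rule (best aspect-ratio match first, then largest area) is our own simplification.

```python
import math
from itertools import product

# Assumed values for illustration; check the model's documentation.
ALLOWED_SIZES = (128, 256, 384, 448, 512, 576, 640, 704, 768, 832, 896, 960, 1024)
MAX_AREA = 1024 * 768


def closest_dimensions(width, height, allowed=ALLOWED_SIZES, max_area=MAX_AREA):
    """Pick the allowed (width, height) whose aspect ratio best matches the original."""
    target = math.log(width / height)
    candidates = [(w, h) for w, h in product(allowed, repeat=2) if w * h <= max_area]

    def score(size):
        w, h = size
        # Compare ratios on a log scale so portrait and landscape errors are
        # treated alike; prefer the larger area when the ratios tie.
        return (abs(math.log(w / h) - target), -(w * h))

    return min(candidates, key=score)
```

With these assumed limits, a 4000 by 3000 original maps to 1024 by 768, and a square original maps to 832 by 832, the largest allowed square that stays under the area cap.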

The output of the Replicate model is a URL to the generated image. This image was then uploaded to S3 so that it can be found by future checks for the same artwork.
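The overall check-then-generate flow can be sketched as follows. To keep the example runnable without AWS, it uses a small in-memory stand-in for the bucket. The class, the function names, the `.png` suffix and the example base URL are all hypothetical.

```python
def get_or_create_image(artwork_id, store, generate):
    """Return the URL for an artwork's image, generating it only once.

    `store` needs `exists(key)`, `save(key, source_url)` and `url(key)`;
    `generate(artwork_id)` returns the URL of a freshly generated image.
    """
    key = f"{artwork_id}.png"  # files are named after the artwork ID (suffix assumed)
    if not store.exists(key):
        store.save(key, generate(artwork_id))
    return store.url(key)


class InMemoryStore:
    """Stand-in for an S3 bucket so the flow can be tried locally."""

    def __init__(self, base_url="https://example.invalid/"):
        self.base_url = base_url
        self.objects = {}

    def exists(self, key):
        return key in self.objects

    def save(self, key, source_url):
        # A real store would download source_url and upload the bytes.
        self.objects[key] = source_url

    def url(self, key):
        return self.base_url + key
```

Because the key depends only on the artwork ID, a second request for the same artwork skips generation entirely, which is why repeat clicks are fast.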

The infrastructure for this part of the project was handled with a combination of Terraform and Serverless. Terraform allows cloud infrastructure to be stored as code, so it can be tracked and adjusted more easily than by digging through a bunch of internal documentation, which is always a pain to read and write! We used Terraform to bring up an S3 bucket with the permissions needed to allow web access to the images, and to store any secrets in AWS itself so that the Lambda function has access to them. Serverless was used for the bulk of the infrastructure, as it specialises in Lambda functions. It allowed us to set up an API that triggers a Lambda function, all configured in a relatively simple YAML file. All we needed to do was write the Lambda function itself; Serverless handles the deployment and keeps track of these services for us.

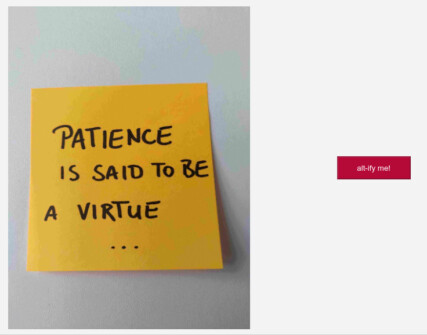

Alt-ernative reality: the user experience

Now that we have an AI-generated image stored in our S3 bucket, all the browser extension has to do is retrieve the image and replace the original image on the page with the new one. It is unfortunate that this process does not take a moment, or 10 seconds, or even 60 seconds. It takes a couple of minutes at least. To make the waiting process less cumbersome — and to prevent people from repeatedly clicking the button and triggering lambda functions to run — we designed a beautiful holding image.

maybe in a future version of this, we will have a more talented (AI?) approach to a holding image

Once an image has been generated, future button clicks will produce the replacement image within seconds. It would have been more fun to see different iterations of the same prompt producing different alternative images, but under the current circumstances, this is just not practical.

A note on accessibility

Maybe projects like this can help highlight the importance of web accessibility — in this case the art of writing good alternative text for images. In our experiments we discovered at least one case of an image that did not resemble the original in any way. When we examined the alt text, it turned out to be a very generic and vague statement. Even the best AI won’t be able to display an image of something it does not know about. And neither can a human.

The alt text “A work made of steel, iron, brass, leather, and textile” cannot possibly convey to anyone (person or AI) what is in the image. Here is our AI-generated attempt at a recreation:

The AI generated an intriguing and somewhat mysterious image of leather and metal parts

Would you have guessed that the original is actually a model of a 16th century horse and rider in metal armour?

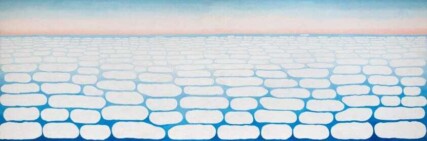

Similarly, the alt text “A work made of oil on canvas” is obviously too vague to describe a painting. Even so, the AI managed to generate something interesting in Georgia O’Keeffe’s style. The painter is best known for her paintings of flowers and blue skies:

beautiful flowers in a style not unlike many of O’Keeffe’s paintings

In this case, however, the image was meant to be based on O’Keeffe’s late and very abstract painting Sky above Clouds IV:

nothing like flowers — a very abstract sky with clouds as seen from an airplane

Altogether, the hackday with the AIC was great fun. Not only do they provide an easy-to-use and well-documented API, the people at the Institute were also really enthusiastic and supportive. We enjoyed exploring the capabilities of AI image generation and overcoming (or in some cases just observing) its challenges and limitations. We are looking forward to this technology being developed further, and maybe in the future we can even publish a user-ready version of a similar app to work with this and other art collections.

In a future variation of this hack, it could be fun to let people guess which version of an image is the original artwork and which is the recreation. Right now, using our Replicate model, it is fairly easy to identify the recreation, but if we throw in what Midjourney produced with the same prompt, things get a lot more interesting:

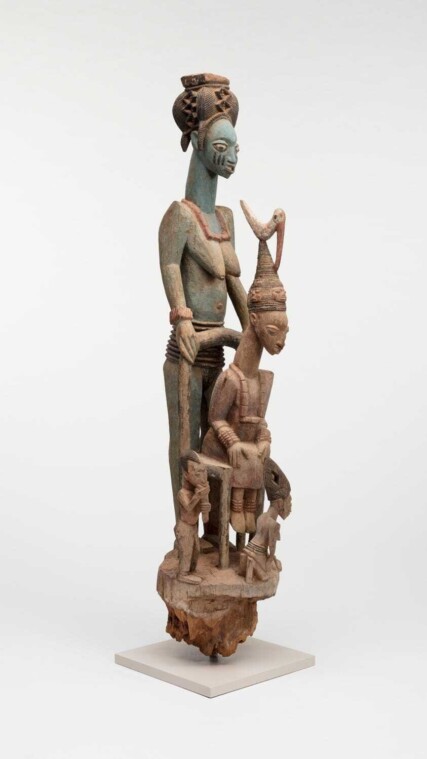

In the above three images, you can see Olowe of Ise’s Veranda Post (Òpó Ògògá), Yoruba King and Wife alongside two recreations, one created by Replicate and one by Midjourney using the same prompt: “Wood on pigment in the style of Olowe of Ise of Wooden sculpture of seated king surrounded by family.” They are all images of wooden objects depicting human figures in a variety of arrangements. Have a guess and then identify the original on the AIC website.