When AI is not enough

How four robots and a human worked together to catalogue historic documents for the British Library.

October 9, 2023

Machine Learning — often misleadingly referred to as “AI” — has been all the rage for a while now, only recently succeeded in the hype stakes by Large Language Models (such as ChatGPT). The claim is that these techniques can perform tasks that would otherwise require laborious human effort; or that would require substantial efforts in procedural programming. Indeed, many of the results are very successful; but frequently the public demonstrations obscure some human intervention to select and refine the outputs.

One of the unambiguous successes is in the field of Optical Character Recognition (OCR). Decades of painstaking refinement of software to recognise text from images have been overtaken in just a few years by machine learning (ML) techniques, especially “AutoML”, which is typically trained on very large quantities of data gleaned from across the web.

This post describes a real world project in digital humanities, in which achieving the desired output required a combination of different “AI” techniques, as well as conventional procedural programming — and with just enough human refinement.

Once upon a time, the British Library contacted us with an idea. They were in the process of digitising a series of volumes of Parliamentary Acts, printed in the 18th and 19th centuries; and wanted to know if we could generate an index to the acts… automagically.

These were all “Local and Private Acts”. In the 18th century, all sorts of activities — including divorce — required an act of parliament. Local Acts were often to authorise such things as building or repairing a road or bridge, and establishing a toll to pay for the work.

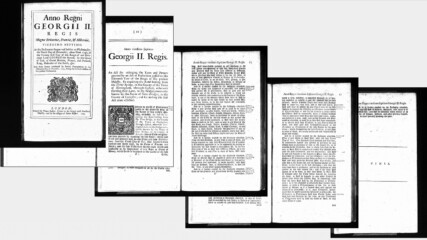

The printed Acts follow a standard structure, with two title pages which between them have the content we were looking for, the first with the royal crest and the regnal year (the year expressed in terms of how many years into the reign of the current king, in Latin). The remainder of the pages are densely printed text, ending with a single line “FINIS”.

Pages of a sample Private Act

The original requirement was to extract the title, date, printer and place of publication from each act, recorded against the frame number. OCR could in principle extract the text — although with varying levels of accuracy — if we knew which bits of text to look for.

Based on the samples supplied, we tried various experiments and came up with a cunning plan, involving two different approaches in parallel. On the one hand, we tried training visual analysis software to classify pages into the first and second kind of title page, and the plain text pages. On the other hand, we tried analysing the OCR, using a combination of font size and familiar strings (such as “Georgii II. Regis.”; fortunately all the acts were in the reigns of George I, George II, and George III) to identify the first and second title pages, from which all else followed.
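To give a flavour of the OCR side, here is a minimal sketch in Python. It is not the project's actual code: the weights, the regular expression and the input shape (a list of `(text, font_size)` pairs per page) are all assumptions made for illustration.

```python
import re

# Hypothetical pattern for the regnal string; real OCR output would need
# something more forgiving of misread characters.
REGNAL_PATTERN = re.compile(r"Georgii\s+(I{1,3})\.?\s+Regis", re.IGNORECASE)


def title_page_score(lines):
    """Score a page from its OCR lines, given as (text, font_size) pairs.

    The weights 0.6 and 0.4 are illustrative assumptions, not tuned values.
    """
    if not lines:
        return 0.0
    score = 0.0
    text = " ".join(line_text for line_text, _ in lines)
    # A familiar string such as "Georgii II. Regis." is strong evidence.
    if REGNAL_PATTERN.search(text):
        score += 0.6
    # Title pages tend to contain at least one line set much larger
    # than the typical line on the page.
    sizes = sorted(size for _, size in lines)
    median = sizes[len(sizes) // 2]
    if max(sizes) >= 1.5 * median:
        score += 0.4
    return score
```

Neither signal is reliable on its own, which is why a score rather than a yes/no answer is the useful output here.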

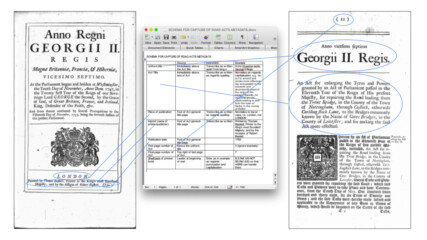

Experimenter’s workbench — testing heuristics to identify title pages

Neither of these methods was 100% successful. However, when we obtained from each a confidence score, and then combined these confidence scores, we were able to correctly identify the title pages of all the sample pages. Please imagine that you’re hearing ominous music in the background while you read the words “the sample pages”.

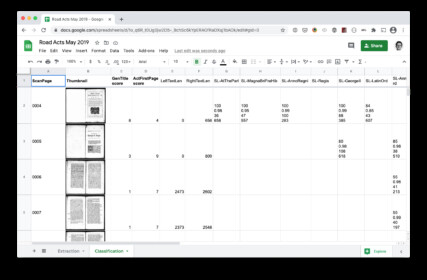

To assess our models, we used Google Sheets. I’m a huge fan of Google Sheets because they’re easy to manipulate programmatically.

Putting the overall output in Google Sheets made a really nice way to visualise tabular data. It saved the effort of writing a display for the outputs of throw-away proof-of-concept code. And there are some particular advantages:

- You can easily version the data, so you can go back and see if you’ve made things better or worse.

- If your images are available online, for example through a IIIF server, you can construct formulas to display them in cells (in this case based on the number in the first column), and save network traffic and processing cycles by asking the IIIF server to send a thumbnail.

- You can do calculations in the spreadsheet, when that’s easier than modifying your code. In this case, the numbers towards the right (in the image above) are the raw output from the analysis, reporting confidence in finding various elements expected on the two special kinds of page that we’re trying to identify. Columns C and D are formulas in Google Sheets, representing an overall score of how likely each page is to be one of those.
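The thumbnail trick from the list above can be sketched roughly as follows. Google Sheets has an `IMAGE()` function, and a IIIF Image API URL can request a fixed-width rendering with a size such as `200,`. The base URL and the idea that the identifier is exactly the value in column A are assumptions for illustration only.

```python
def thumbnail_formula(base_url, row, width=200):
    """Build a Google Sheets formula showing a IIIF thumbnail for one row.

    Assumes (hypothetically) that column A holds the IIIF image identifier
    and that the server accepts '{width},' as the size parameter.
    """
    return (
        f'=IMAGE("{base_url}/" & A{row} & '
        f'"/full/{width},/0/default.jpg")'
    )


# Example: the formula that would go into row 2 of the sheet.
print(thumbnail_formula("https://iiif.example.org/iiif", 2))
```

Because the formula refers to the cell rather than a fixed value, it can be filled down the whole column, and the server only ever sends small images.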

Thus we could keep tuning the heuristics, and running them again across the full set of images, and then review the Google sheet to see how that went.

By plugging in the output from the increasingly tuned visual recognition model, we could see how we were doing with that — and then demonstrate that a simple sum of the outputs from the two systems was conclusive.
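In code, the "simple sum" might look something like the sketch below. The frame numbers, the score values and the threshold of 1.2 are invented for illustration; in the project itself the sums were formulas in the spreadsheet.

```python
def combine_scores(ocr_scores, vision_scores, threshold=1.2):
    """Return frames whose summed confidence reaches the threshold.

    Both inputs map frame number -> confidence in the range 0..1.
    The threshold is a hypothetical value, not the one used in the project.
    """
    frames = set(ocr_scores) | set(vision_scores)
    combined = {
        frame: ocr_scores.get(frame, 0.0) + vision_scores.get(frame, 0.0)
        for frame in frames
    }
    return sorted(frame for frame, score in combined.items() if score >= threshold)


# Frame 2 fools the OCR heuristics and frame 3 fools the vision model,
# but only frame 1 scores well on both.
ocr = {1: 0.9, 2: 0.7, 3: 0.1}
vision = {1: 0.8, 2: 0.2, 3: 0.6}
print(combine_scores(ocr, vision))
```

The point is that the two methods tend to be wrong about different pages, so a false positive from one is rarely backed up by the other.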

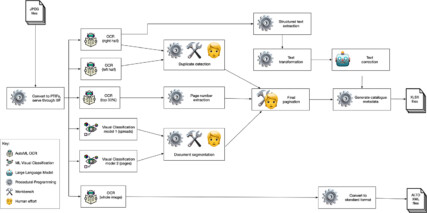

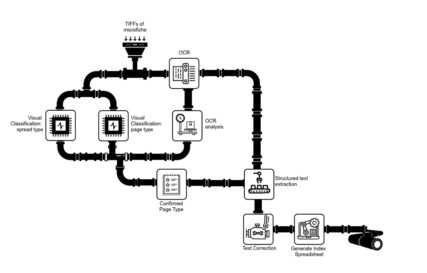

So this was our cunning plan, expressed in the medium of plumbing:

I had a ten-hour train journey across Europe to a conference, and amused myself by creating this diagram.

We told our contact at the Library that yes, we thought we could achieve this.

Almost a year later, when the hard drive arrived from the Library team containing some 20,000 images, digitised from some 30 reels, we were all in lockdown. But this turned out to be an excellent lockdown project!

In the interim, there had been various additions to the requested data. All of them sounded simple to us, and we cheerfully agreed.

The basic requirement was to locate the start of each act in the sequence of images, and extract the act title, date, publisher and so on from the two title pages. But we were also asked to report the page number in the book of each act, and the number of pages in each act. At first, this seemed like it should be a trivial matter of subtracting the page number of one title page from the page number of the next one. Actually this turned out to be a real challenge!

The huge advantage we had was Cogapp Refinery Services (CRS). This is a system we’ve built up over a number of projects. CRS allows us to define a cascade of operations on files (usually, but not necessarily, images) which will then be processed automatically as soon as files are uploaded. This allowed us to construct our pipeline along the lines of the plumbing-themed image above (as it turned out, the plumbing got a lot more complicated) and then push the scanned images through it without having to initiate all the steps manually.

We set up a pipeline to carry out the steps above:

- converting each image format and making it available at a IIIF endpoint;

- sending each image to a visual classification model, and also to OCR;

- using procedural programming to analyse the OCR and score each page on how likely it is to be a title page;

- then combining the scores from this with the scores from the visual classification model, to definitively identify the title pages for each act.

IIIF is the International Image Interoperability Framework; a protocol which makes it easy to access an image over HTTP with a scale and crop defined on the fly.

Applied across all the source images, this was, inevitably, not quite as successful as the initial experiments on a small sample had suggested. However, we refined both the analysis of the OCR, and the visual classification system.

Our refinements included splitting each frame (which was originally a two-page spread in a book) and sending each half separately for visual classification. Because CRS had made all the images available via IIIF, this was trivial to implement. For example, we could just replace “/full/” with “/pct:0,0,50,100/” in a URL to send the left half of the image for analysis instead of the whole.
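As a small sketch of that URL rewrite (the example URL is made up, and the right-hand region `pct:50,0,50,100` is my extension of the left-hand example, following the IIIF `pct:x,y,w,h` region syntax):

```python
def half_frame_url(url, side):
    """Rewrite a IIIF image URL to request the left or right half of a frame."""
    region = {"left": "pct:0,0,50,100", "right": "pct:50,0,50,100"}[side]
    # Replace only the first "/full/": in IIIF version 2 URLs the size
    # parameter can also be "full", and that one must be left alone.
    return url.replace("/full/", f"/{region}/", 1)


url = "https://iiif.example.org/iiif/frame-0001/full/max/0/default.jpg"
print(half_frame_url(url, "left"))
print(half_frame_url(url, "right"))
```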

We also added scoring based on the sequence. Each act should begin with a ‘general title page’, followed by a blank page, followed by an ‘act title page’. So we could amplify or diminish the score for each of the title pages according to the scoring of their neighbour.
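One way to express that neighbour adjustment is sketched below. The multiplier formula and the `boost` value are assumptions of mine, and the sketch ignores the blank page in between other than by its position: it simply looks two pages ahead or behind.

```python
def adjust_for_sequence(general, act, boost=0.5):
    """Amplify or diminish title-page scores using the expected sequence.

    general[i] and act[i] are the scores (0..1) that page i is a general
    title page or an act title page. The expected pattern is: general
    title page, blank page, act title page. The multiplier runs from
    (1 - boost) when the neighbour scores 0 to (1 + boost) when it
    scores 1; this formula is illustrative only.
    """
    n = len(general)
    adjusted_general, adjusted_act = [], []
    for i in range(n):
        act_two_later = act[i + 2] if i + 2 < n else 0.0
        general_two_earlier = general[i - 2] if i >= 2 else 0.0
        adjusted_general.append(
            general[i] * ((1 - boost) + 2 * boost * act_two_later)
        )
        adjusted_act.append(
            act[i] * ((1 - boost) + 2 * boost * general_two_earlier)
        )
    return adjusted_general, adjusted_act
```

A page that looks a bit like a general title page but has nothing resembling an act title page two pages later gets marked down, while a matched pair reinforce each other.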

Through this we were able to accurately identify the frames corresponding to the start of an act; and although the OCR wasn’t wonderful, because of the relatively consistent nature of the title pages, we got a reasonable result in extracting each of the requested catalogue elements.

However, the requirement that we thought would be trivial — counting the pages in each act, and identifying the page numbers — turned out to be trickier. There were two issues:

- We discovered that some frames were essentially duplicated, presumably by accident. (Surprisingly, it seemed that not only had some spreads been photographed twice, but sometimes it also appeared that a single frame of the microfilm had been digitised twice.) Additionally, some spreads of the book were deliberately photographed twice, first blanking out the right-hand page, then again blanking out the left-hand page. So while in general one digitised frame represented two pages in the book, sometimes it only represented one; and sometimes (when it was a completely duplicated spread) it didn’t represent any increment to the page number at all. A solution to this might have been to use the page numbers to identify duplicates, if we’d been able to get page numbers reliably from the OCR — but see next paragraph.

- Page numbers were printed in a standard location, at top left and top right of facing pages; but only on some pages (none on title pages), and additionally the OCR made quite a lot of errors on the page numbers. (Errors in digits are often more serious than in words, because one or two typos in a word normally don’t obscure which word it is.) A solution to this might have been simply to use dead reckoning for pages without a number (i.e. keep counting the un-numbered pages until we get to the next numbered page), if every frame represented two consecutive pages of the book — but see previous paragraph.

If there had been only one of these issues, it would have been relatively easy to deal with! But each confounded an easy solution to the other.

Using an ML vision service to identify duplicates was ineffective, because two pages full of printed text looked very similar. Instead, we implemented a pass to identify duplicate spreads based on comparing the OCR. This gave a score based on the Levenshtein distance between the text of each consecutive pair of spreads. This was fairly effective, but there wasn’t “clear water” between the scores of genuine duplicates and those of spreads that were merely similar. In any case, spotting duplicates wasn’t enough, because of the spreads which were photographed deliberately with facing pages covered up.
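For readers who haven't met it, Levenshtein distance counts the single-character insertions, deletions and substitutions needed to turn one string into another. Here is a minimal pure-Python sketch; normalising by the longer text's length to get a 0..1 score is my assumption, not necessarily how the project scored things.

```python
def levenshtein(a, b):
    """Edit distance between two strings, using two rows of the DP table."""
    if len(a) < len(b):
        a, b = b, a  # keep the inner row as short as possible
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,                       # deletion
                current[j - 1] + 1,                    # insertion
                previous[j - 1] + (char_a != char_b),  # substitution
            ))
        previous = current
    return previous[-1]


def duplicate_score(text_a, text_b):
    """Similarity from 0.0 (nothing in common) to 1.0 (identical text)."""
    longest = max(len(text_a), len(text_b))
    if longest == 0:
        # Two empty texts count as identical here; a real pipeline would
        # probably want to treat blank pages as a separate case.
        return 1.0
    return 1.0 - levenshtein(text_a, text_b) / longest
```

Two OCR passes over the same spread rarely produce identical text, so a true duplicate scores high but not 1.0, which is part of why there was no clean cut-off.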

Enter the humans!

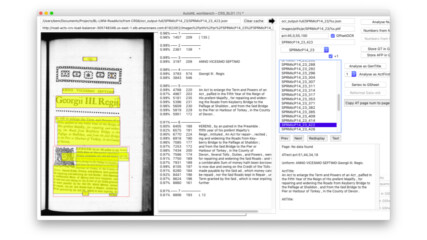

But first, enter the tooling. I’m a great believer in building tools for humans to work on data. Two of my favourite materials to build tools out of are IIIF and Google Sheets. The latter obviously has the benefits of requiring zero set up or hosting (our friends in Mountain View do that for us), of auto-saving, and allowing simultaneous multi-user access. That makes Google Sheets great for allowing people to collaborate over data. What makes them great for allowing people and computers to collaborate is that there is a very comprehensive API (Application Programming Interface). The icing on the cake is that a Google Sheet can display images from a IIIF server, including using formulas, to select the source image and zoom level, and to select a detail from it.

When all the automated processes completed for a digitised reel of microfilm, they generated a new Google Sheets document, with several sheets.

Sheet 1 displayed just the candidates for duplicate spreads (the ones with a relatively high score). Each row showed a pair of frames, at a scale we established was helpful, sorted by ‘duplicate’ score, and with a column to confirm whether in fact they were duplicates. It was then a quick and easy job to eyeball the pairs and mark each as duplicate or not. In case it wasn’t clear enough, each image in the sheet was linked to view the digitised spread at higher resolution, allowing the human operator to confirm completely.

Sheet 2 was used to correct page numbers. Each image was shown in a small thumbnail (this helped identify blank, obscured, and title pages), with the best guess for the page number. One column displayed the page number as deduced from OCR. Another showed the running ‘dead reckoning’, taking into account the determined duplicate pages. A third highlighted anomalies, with cells coloured to make these more obvious. Thus it was simple for a human to scroll down the sheet, see where the sequence went wrong, and correct it.
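The dead-reckoning column can be sketched like this. The three frame kinds and the tuple layout are hypothetical names for illustration; the idea is simply that a normal spread advances the count by two pages, a half-blanked spread by one, and a duplicate by none.

```python
# Pages contributed by each kind of frame (labels are illustrative).
PAGES_PER_FRAME = {"spread": 2, "half": 1, "duplicate": 0}


def dead_reckoning(frames, first_page=1):
    """Compute the expected first page number shown on each frame.

    frames is a list of (kind, ocr_page) pairs, where ocr_page is the
    page number read by OCR for the first visible page, or None if no
    number was found. Returns (reckoned_page, ocr_page, anomaly) rows.
    """
    rows = []
    next_page = first_page
    last_start = first_page
    for kind, ocr_page in frames:
        # A duplicate shows the same pages as the frame before it.
        start = last_start if kind == "duplicate" else next_page
        last_start = start
        next_page += PAGES_PER_FRAME[kind]
        anomaly = ocr_page is not None and ocr_page != start
        rows.append((start, ocr_page, anomaly))
    return rows


frames = [("spread", None), ("spread", 3), ("duplicate", 3),
          ("half", 5), ("half", None), ("spread", 8)]
for row in dead_reckoning(frames):
    print(row)
```

In this made-up run the last frame is flagged, because the count says page 7 while the OCR says 8. That is exactly the kind of row a human would look at, deciding whether the OCR misread the number or an earlier frame was misclassified.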

One more thing

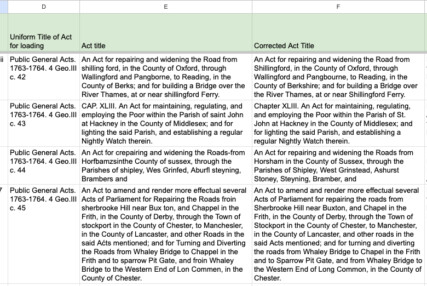

The final step involved one more “robot”. As noted above, the 18th-century printing of the acts (in archaic fonts, with sometimes rough printing on thin paper) was not the ideal source for the AutoML OCR, which did a mostly decent job but was sometimes not great.

However, this is where the Large Language Models such as ChatGPT come in. These are sometimes dismissed as “autocorrect on steroids” — generating text by predicting what word is likely to follow an existing sequence of text, and entirely capable of making up (plausible-sounding) nonsense. But in this case, autocorrect is just what we wanted!

I tested the idea by simply pasting one of the Act titles into the ChatGPT UI, preceded by the prompt “The following text was scanned from an 18th century document, and then transcribed by OCR. Please correct it to what you think the original text would have been”. (Fun fact: it’s important to be polite to ChatGPT — you get better results). The result was immediately promising! We then used the OpenAI “completions” API to run all the act titles through ChatGPT, with the same prompt, putting the output, of course, into yet another column in the Google Sheet.
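The batch step can be sketched without tying it to any particular client library. In the sketch below, `complete` is a hypothetical callable standing in for whatever function sends a prompt to the language model and returns its reply; the colon and blank line separating the prompt from the title are also my assumption.

```python
PROMPT = (
    "The following text was scanned from an 18th century document, "
    "and then transcribed by OCR. Please correct it to what you think "
    "the original text would have been"
)


def build_prompt(ocr_title):
    """Prefix one OCR'd act title with the correction prompt."""
    return f"{PROMPT}:\n\n{ocr_title.strip()}"


def correct_titles(titles, complete):
    """Run every title through the model.

    `complete` is a placeholder: any function that takes a prompt string
    and returns the model's text reply.
    """
    return [complete(build_prompt(title)).strip() for title in titles]


# Stand-in "model" for demonstration: it just echoes the title back.
def echo_model(prompt):
    return prompt.splitlines()[-1]


print(correct_titles(["An A& for repairing the Road"], echo_model))
```

Keeping the original OCR title in one column and the model's output in the next makes it easy to spot any place where the "correction" has strayed into invention.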

Conclusion

And this is how four robots (AutoML OCR; ML visual classification; a Large Language Model; and some old-fashioned procedural programming) formed a team with humans using a custom workbench, to index 20,000 frames of 18th-century Parliamentary Acts!